- •Preface

- •Biological Vision Systems

- •Visual Representations from Paintings to Photographs

- •Computer Vision

- •The Limitations of Standard 2D Images

- •3D Imaging, Analysis and Applications

- •Book Objective and Content

- •Acknowledgements

- •Contents

- •Contributors

- •2.1 Introduction

- •Chapter Outline

- •2.2 An Overview of Passive 3D Imaging Systems

- •2.2.1 Multiple View Approaches

- •2.2.2 Single View Approaches

- •2.3 Camera Modeling

- •2.3.1 Homogeneous Coordinates

- •2.3.2 Perspective Projection Camera Model

- •2.3.2.1 Camera Modeling: The Coordinate Transformation

- •2.3.2.2 Camera Modeling: Perspective Projection

- •2.3.2.3 Camera Modeling: Image Sampling

- •2.3.2.4 Camera Modeling: Concatenating the Projective Mappings

- •2.3.3 Radial Distortion

- •2.4 Camera Calibration

- •2.4.1 Estimation of a Scene-to-Image Planar Homography

- •2.4.2 Basic Calibration

- •2.4.3 Refined Calibration

- •2.4.4 Calibration of a Stereo Rig

- •2.5 Two-View Geometry

- •2.5.1 Epipolar Geometry

- •2.5.2 Essential and Fundamental Matrices

- •2.5.3 The Fundamental Matrix for Pure Translation

- •2.5.4 Computation of the Fundamental Matrix

- •2.5.5 Two Views Separated by a Pure Rotation

- •2.5.6 Two Views of a Planar Scene

- •2.6 Rectification

- •2.6.1 Rectification with Calibration Information

- •2.6.2 Rectification Without Calibration Information

- •2.7 Finding Correspondences

- •2.7.1 Correlation-Based Methods

- •2.7.2 Feature-Based Methods

- •2.8 3D Reconstruction

- •2.8.1 Stereo

- •2.8.1.1 Dense Stereo Matching

- •2.8.1.2 Triangulation

- •2.8.2 Structure from Motion

- •2.9 Passive Multiple-View 3D Imaging Systems

- •2.9.1 Stereo Cameras

- •2.9.2 3D Modeling

- •2.9.3 Mobile Robot Localization and Mapping

- •2.10 Passive Versus Active 3D Imaging Systems

- •2.11 Concluding Remarks

- •2.12 Further Reading

- •2.13 Questions

- •2.14 Exercises

- •References

- •3.1 Introduction

- •3.1.1 Historical Context

- •3.1.2 Basic Measurement Principles

- •3.1.3 Active Triangulation-Based Methods

- •3.1.4 Chapter Outline

- •3.2 Spot Scanners

- •3.2.1 Spot Position Detection

- •3.3 Stripe Scanners

- •3.3.1 Camera Model

- •3.3.2 Sheet-of-Light Projector Model

- •3.3.3 Triangulation for Stripe Scanners

- •3.4 Area-Based Structured Light Systems

- •3.4.1 Gray Code Methods

- •3.4.1.1 Decoding of Binary Fringe-Based Codes

- •3.4.1.2 Advantage of the Gray Code

- •3.4.2 Phase Shift Methods

- •3.4.2.1 Removing the Phase Ambiguity

- •3.4.3 Triangulation for a Structured Light System

- •3.5 System Calibration

- •3.6 Measurement Uncertainty

- •3.6.1 Uncertainty Related to the Phase Shift Algorithm

- •3.6.2 Uncertainty Related to Intrinsic Parameters

- •3.6.3 Uncertainty Related to Extrinsic Parameters

- •3.6.4 Uncertainty as a Design Tool

- •3.7 Experimental Characterization of 3D Imaging Systems

- •3.7.1 Low-Level Characterization

- •3.7.2 System-Level Characterization

- •3.7.3 Characterization of Errors Caused by Surface Properties

- •3.7.4 Application-Based Characterization

- •3.8 Selected Advanced Topics

- •3.8.1 Thin Lens Equation

- •3.8.2 Depth of Field

- •3.8.3 Scheimpflug Condition

- •3.8.4 Speckle and Uncertainty

- •3.8.5 Laser Depth of Field

- •3.8.6 Lateral Resolution

- •3.9 Research Challenges

- •3.10 Concluding Remarks

- •3.11 Further Reading

- •3.12 Questions

- •3.13 Exercises

- •References

- •4.1 Introduction

- •Chapter Outline

- •4.2 Representation of 3D Data

- •4.2.1 Raw Data

- •4.2.1.1 Point Cloud

- •4.2.1.2 Structured Point Cloud

- •4.2.1.3 Depth Maps and Range Images

- •4.2.1.4 Needle map

- •4.2.1.5 Polygon Soup

- •4.2.2 Surface Representations

- •4.2.2.1 Triangular Mesh

- •4.2.2.2 Quadrilateral Mesh

- •4.2.2.3 Subdivision Surfaces

- •4.2.2.4 Morphable Model

- •4.2.2.5 Implicit Surface

- •4.2.2.6 Parametric Surface

- •4.2.2.7 Comparison of Surface Representations

- •4.2.3 Solid-Based Representations

- •4.2.3.1 Voxels

- •4.2.3.3 Binary Space Partitioning

- •4.2.3.4 Constructive Solid Geometry

- •4.2.3.5 Boundary Representations

- •4.2.4 Summary of Solid-Based Representations

- •4.3 Polygon Meshes

- •4.3.1 Mesh Storage

- •4.3.2 Mesh Data Structures

- •4.3.2.1 Halfedge Structure

- •4.4 Subdivision Surfaces

- •4.4.1 Doo-Sabin Scheme

- •4.4.2 Catmull-Clark Scheme

- •4.4.3 Loop Scheme

- •4.5 Local Differential Properties

- •4.5.1 Surface Normals

- •4.5.2 Differential Coordinates and the Mesh Laplacian

- •4.6 Compression and Levels of Detail

- •4.6.1 Mesh Simplification

- •4.6.1.1 Edge Collapse

- •4.6.1.2 Quadric Error Metric

- •4.6.2 QEM Simplification Summary

- •4.6.3 Surface Simplification Results

- •4.7 Visualization

- •4.8 Research Challenges

- •4.9 Concluding Remarks

- •4.10 Further Reading

- •4.11 Questions

- •4.12 Exercises

- •References

- •1.1 Introduction

- •Chapter Outline

- •1.2 A Historical Perspective on 3D Imaging

- •1.2.1 Image Formation and Image Capture

- •1.2.2 Binocular Perception of Depth

- •1.2.3 Stereoscopic Displays

- •1.3 The Development of Computer Vision

- •1.3.1 Further Reading in Computer Vision

- •1.4 Acquisition Techniques for 3D Imaging

- •1.4.1 Passive 3D Imaging

- •1.4.2 Active 3D Imaging

- •1.4.3 Passive Stereo Versus Active Stereo Imaging

- •1.5 Twelve Milestones in 3D Imaging and Shape Analysis

- •1.5.1 Active 3D Imaging: An Early Optical Triangulation System

- •1.5.2 Passive 3D Imaging: An Early Stereo System

- •1.5.3 Passive 3D Imaging: The Essential Matrix

- •1.5.4 Model Fitting: The RANSAC Approach to Feature Correspondence Analysis

- •1.5.5 Active 3D Imaging: Advances in Scanning Geometries

- •1.5.6 3D Registration: Rigid Transformation Estimation from 3D Correspondences

- •1.5.7 3D Registration: Iterative Closest Points

- •1.5.9 3D Local Shape Descriptors: Spin Images

- •1.5.10 Passive 3D Imaging: Flexible Camera Calibration

- •1.5.11 3D Shape Matching: Heat Kernel Signatures

- •1.6 Applications of 3D Imaging

- •1.7 Book Outline

- •1.7.1 Part I: 3D Imaging and Shape Representation

- •1.7.2 Part II: 3D Shape Analysis and Processing

- •1.7.3 Part III: 3D Imaging Applications

- •References

- •5.1 Introduction

- •5.1.1 Applications

- •5.1.2 Chapter Outline

- •5.2 Mathematical Background

- •5.2.1 Differential Geometry

- •5.2.2 Curvature of Two-Dimensional Surfaces

- •5.2.3 Discrete Differential Geometry

- •5.2.4 Diffusion Geometry

- •5.2.5 Discrete Diffusion Geometry

- •5.3 Feature Detectors

- •5.3.1 A Taxonomy

- •5.3.2 Harris 3D

- •5.3.3 Mesh DOG

- •5.3.4 Salient Features

- •5.3.5 Heat Kernel Features

- •5.3.6 Topological Features

- •5.3.7 Maximally Stable Components

- •5.3.8 Benchmarks

- •5.4 Feature Descriptors

- •5.4.1 A Taxonomy

- •5.4.2 Curvature-Based Descriptors (HK and SC)

- •5.4.3 Spin Images

- •5.4.4 Shape Context

- •5.4.5 Integral Volume Descriptor

- •5.4.6 Mesh Histogram of Gradients (HOG)

- •5.4.7 Heat Kernel Signature (HKS)

- •5.4.8 Scale-Invariant Heat Kernel Signature (SI-HKS)

- •5.4.9 Color Heat Kernel Signature (CHKS)

- •5.4.10 Volumetric Heat Kernel Signature (VHKS)

- •5.5 Research Challenges

- •5.6 Conclusions

- •5.7 Further Reading

- •5.8 Questions

- •5.9 Exercises

- •References

- •6.1 Introduction

- •Chapter Outline

- •6.2 Registration of Two Views

- •6.2.1 Problem Statement

- •6.2.2 The Iterative Closest Points (ICP) Algorithm

- •6.2.3 ICP Extensions

- •6.2.3.1 Techniques for Pre-alignment

- •Global Approaches

- •Local Approaches

- •6.2.3.2 Techniques for Improving Speed

- •Subsampling

- •Closest Point Computation

- •Distance Formulation

- •6.2.3.3 Techniques for Improving Accuracy

- •Outlier Rejection

- •Additional Information

- •Probabilistic Methods

- •6.3 Advanced Techniques

- •6.3.1 Registration of More than Two Views

- •Reducing Error Accumulation

- •Automating Registration

- •6.3.2 Registration in Cluttered Scenes

- •Point Signatures

- •Matching Methods

- •6.3.3 Deformable Registration

- •Methods Based on General Optimization Techniques

- •Probabilistic Methods

- •6.3.4 Machine Learning Techniques

- •Improving the Matching

- •Object Detection

- •6.4 Quantitative Performance Evaluation

- •6.5 Case Study 1: Pairwise Alignment with Outlier Rejection

- •6.6 Case Study 2: ICP with Levenberg-Marquardt

- •6.6.1 The LM-ICP Method

- •6.6.2 Computing the Derivatives

- •6.6.3 The Case of Quaternions

- •6.6.4 Summary of the LM-ICP Algorithm

- •6.6.5 Results and Discussion

- •6.7 Case Study 3: Deformable ICP with Levenberg-Marquardt

- •6.7.1 Surface Representation

- •6.7.2 Cost Function

- •Data Term: Global Surface Attraction

- •Data Term: Boundary Attraction

- •Penalty Term: Spatial Smoothness

- •Penalty Term: Temporal Smoothness

- •6.7.3 Minimization Procedure

- •6.7.4 Summary of the Algorithm

- •6.7.5 Experiments

- •6.8 Research Challenges

- •6.9 Concluding Remarks

- •6.10 Further Reading

- •6.11 Questions

- •6.12 Exercises

- •References

- •7.1 Introduction

- •7.1.1 Retrieval and Recognition Evaluation

- •7.1.2 Chapter Outline

- •7.2 Literature Review

- •7.3 3D Shape Retrieval Techniques

- •7.3.1 Depth-Buffer Descriptor

- •7.3.1.1 Computing the 2D Projections

- •7.3.1.2 Obtaining the Feature Vector

- •7.3.1.3 Evaluation

- •7.3.1.4 Complexity Analysis

- •7.3.2 Spin Images for Object Recognition

- •7.3.2.1 Matching

- •7.3.2.2 Evaluation

- •7.3.2.3 Complexity Analysis

- •7.3.3 Salient Spectral Geometric Features

- •7.3.3.1 Feature Points Detection

- •7.3.3.2 Local Descriptors

- •7.3.3.3 Shape Matching

- •7.3.3.4 Evaluation

- •7.3.3.5 Complexity Analysis

- •7.3.4 Heat Kernel Signatures

- •7.3.4.1 Evaluation

- •7.3.4.2 Complexity Analysis

- •7.4 Research Challenges

- •7.5 Concluding Remarks

- •7.6 Further Reading

- •7.7 Questions

- •7.8 Exercises

- •References

- •8.1 Introduction

- •Chapter Outline

- •8.2 3D Face Scan Representation and Visualization

- •8.3 3D Face Datasets

- •8.3.1 FRGC v2 3D Face Dataset

- •8.3.2 The Bosphorus Dataset

- •8.4 3D Face Recognition Evaluation

- •8.4.1 Face Verification

- •8.4.2 Face Identification

- •8.5 Processing Stages in 3D Face Recognition

- •8.5.1 Face Detection and Segmentation

- •8.5.2 Removal of Spikes

- •8.5.3 Filling of Holes and Missing Data

- •8.5.4 Removal of Noise

- •8.5.5 Fiducial Point Localization and Pose Correction

- •8.5.6 Spatial Resampling

- •8.5.7 Feature Extraction on Facial Surfaces

- •8.5.8 Classifiers for 3D Face Matching

- •8.6 ICP-Based 3D Face Recognition

- •8.6.1 ICP Outline

- •8.6.2 A Critical Discussion of ICP

- •8.6.3 A Typical ICP-Based 3D Face Recognition Implementation

- •8.6.4 ICP Variants and Other Surface Registration Approaches

- •8.7 PCA-Based 3D Face Recognition

- •8.7.1 PCA System Training

- •8.7.2 PCA Training Using Singular Value Decomposition

- •8.7.3 PCA Testing

- •8.7.4 PCA Performance

- •8.8 LDA-Based 3D Face Recognition

- •8.8.1 Two-Class LDA

- •8.8.2 LDA with More than Two Classes

- •8.8.3 LDA in High Dimensional 3D Face Spaces

- •8.8.4 LDA Performance

- •8.9 Normals and Curvature in 3D Face Recognition

- •8.9.1 Computing Curvature on a 3D Face Scan

- •8.10 Recent Techniques in 3D Face Recognition

- •8.10.1 3D Face Recognition Using Annotated Face Models (AFM)

- •8.10.2 Local Feature-Based 3D Face Recognition

- •8.10.2.1 Keypoint Detection and Local Feature Matching

- •8.10.2.2 Other Local Feature-Based Methods

- •8.10.3 Expression Modeling for Invariant 3D Face Recognition

- •8.10.3.1 Other Expression Modeling Approaches

- •8.11 Research Challenges

- •8.12 Concluding Remarks

- •8.13 Further Reading

- •8.14 Questions

- •8.15 Exercises

- •References

- •9.1 Introduction

- •Chapter Outline

- •9.2 DEM Generation from Stereoscopic Imagery

- •9.2.1 Stereoscopic DEM Generation: Literature Review

- •9.2.2 Accuracy Evaluation of DEMs

- •9.2.3 An Example of DEM Generation from SPOT-5 Imagery

- •9.3 DEM Generation from InSAR

- •9.3.1 Techniques for DEM Generation from InSAR

- •9.3.1.1 Basic Principle of InSAR in Elevation Measurement

- •9.3.1.2 Processing Stages of DEM Generation from InSAR

- •The Branch-Cut Method of Phase Unwrapping

- •The Least Squares (LS) Method of Phase Unwrapping

- •9.3.2 Accuracy Analysis of DEMs Generated from InSAR

- •9.3.3 Examples of DEM Generation from InSAR

- •9.4 DEM Generation from LIDAR

- •9.4.1 LIDAR Data Acquisition

- •9.4.2 Accuracy, Error Types and Countermeasures

- •9.4.3 LIDAR Interpolation

- •9.4.4 LIDAR Filtering

- •9.4.5 DTM from Statistical Properties of the Point Cloud

- •9.5 Research Challenges

- •9.6 Concluding Remarks

- •9.7 Further Reading

- •9.8 Questions

- •9.9 Exercises

- •References

- •10.1 Introduction

- •10.1.1 Allometric Modeling of Biomass

- •10.1.2 Chapter Outline

- •10.2 Aerial Photo Mensuration

- •10.2.1 Principles of Aerial Photogrammetry

- •10.2.1.1 Geometric Basis of Photogrammetric Measurement

- •10.2.1.2 Ground Control and Direct Georeferencing

- •10.2.2 Tree Height Measurement Using Forest Photogrammetry

- •10.2.2.2 Automated Methods in Forest Photogrammetry

- •10.3 Airborne Laser Scanning

- •10.3.1 Principles of Airborne Laser Scanning

- •10.3.1.1 Lidar-Based Measurement of Terrain and Canopy Surfaces

- •10.3.2 Individual Tree-Level Measurement Using Lidar

- •10.3.2.1 Automated Individual Tree Measurement Using Lidar

- •10.3.3 Area-Based Approach to Estimating Biomass with Lidar

- •10.4 Future Developments

- •10.5 Concluding Remarks

- •10.6 Further Reading

- •10.7 Questions

- •References

- •11.1 Introduction

- •Chapter Outline

- •11.2 Volumetric Data Acquisition

- •11.2.1 Computed Tomography

- •11.2.1.1 Characteristics of 3D CT Data

- •11.2.2 Positron Emission Tomography (PET)

- •11.2.2.1 Characteristics of 3D PET Data

- •Relaxation

- •11.2.3.1 Characteristics of the 3D MRI Data

- •Image Quality and Artifacts

- •11.2.4 Summary

- •11.3 Surface Extraction and Volumetric Visualization

- •11.3.1 Surface Extraction

- •Example: Curvatures and Geometric Tools

- •11.3.2 Volume Rendering

- •11.3.3 Summary

- •11.4 Volumetric Image Registration

- •11.4.1 A Hierarchy of Transformations

- •11.4.1.1 Rigid Body Transformation

- •11.4.1.2 Similarity Transformations and Anisotropic Scaling

- •11.4.1.3 Affine Transformations

- •11.4.1.4 Perspective Transformations

- •11.4.1.5 Non-rigid Transformations

- •11.4.2 Points and Features Used for the Registration

- •11.4.2.1 Landmark Features

- •11.4.2.2 Surface-Based Registration

- •11.4.2.3 Intensity-Based Registration

- •11.4.3 Registration Optimization

- •11.4.3.1 Estimation of Registration Errors

- •11.4.4 Summary

- •11.5 Segmentation

- •11.5.1 Semi-automatic Methods

- •11.5.1.1 Thresholding

- •11.5.1.2 Region Growing

- •11.5.1.3 Deformable Models

- •Snakes

- •Balloons

- •11.5.2 Fully Automatic Methods

- •11.5.2.1 Atlas-Based Segmentation

- •11.5.2.2 Statistical Shape Modeling and Analysis

- •11.5.3 Summary

- •11.6 Diffusion Imaging: An Illustration of a Full Pipeline

- •11.6.1 From Scalar Images to Tensors

- •11.6.2 From Tensor Image to Information

- •11.6.3 Summary

- •11.7 Applications

- •11.7.1 Diagnosis and Morphometry

- •11.7.2 Simulation and Training

- •11.7.3 Surgical Planning and Guidance

- •11.7.4 Summary

- •11.8 Concluding Remarks

- •11.9 Research Challenges

- •11.10 Further Reading

- •Data Acquisition

- •Surface Extraction

- •Volume Registration

- •Segmentation

- •Diffusion Imaging

- •Software

- •11.11 Questions

- •11.12 Exercises

- •References

- •Index

9 3D Digital Elevation Model Generation |


with the operator W(·) wrapping values into the range −π ≤ ϕ ≤ π. M and N denote the image size in the two dimensions. Finding the minimum in Eq. (9.16) is equivalent to solving the following system of linear equations:

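$$
(\varphi_{i+1,j} - 2\varphi_{i,j} + \varphi_{i-1,j}) + (\varphi_{i,j+1} - 2\varphi_{i,j} + \varphi_{i,j-1}) = \rho_{i,j} \tag{9.18}
$$

where

$$
\rho_{i,j} = \Delta^{x}_{i,j} - \Delta^{x}_{i-1,j} + \Delta^{y}_{i,j} - \Delta^{y}_{i,j-1}. \tag{9.19}
$$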

Equation (9.18) represents a discretized version of Poisson’s equation [121]. The LS problem can be formulated as the solution of the set of linear equations:

$$
A\varphi = \rho \tag{9.20}
$$

where A is an MN × MN sparse matrix, the vector ρ is computed from the wrapped phase values, and the vector φ contains the unwrapped phase values to be estimated. Although LS-based methods are computationally very efficient when they make use of fast Fourier transform (FFT) techniques, they are not very accurate because local errors tend to spread without any means of limiting them [53].
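The least squares formulation of Eqs. (9.18) to (9.20) can be sketched in a few lines. The following is a minimal illustration, not the implementation used in the cited work: it assumes an unweighted problem with zero wrapped differences outside the image (Neumann boundaries), and it uses small dense cosine-transform matrices in place of the FFT-based transforms that make the method fast in practice. The function names `wrap`, `dct_matrix` and `unwrap_ls` are hypothetical.

```python
import numpy as np


def wrap(a):
    """Wrap values into the interval [-pi, pi)."""
    return (a + np.pi) % (2.0 * np.pi) - np.pi


def dct_matrix(n):
    """Orthonormal DCT-II matrix; it diagonalizes the 1D Neumann Laplacian."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    c = np.cos(np.pi * k * (i + 0.5) / n) * np.sqrt(2.0 / n)
    c[0, :] /= np.sqrt(2.0)
    return c


def unwrap_ls(psi):
    """Unweighted least squares phase unwrapping of a wrapped M x N phase image."""
    m, n = psi.shape
    # Wrapped first differences along both axes (zero past the last row/column).
    dx = np.zeros((m, n))
    dy = np.zeros((m, n))
    dx[:-1, :] = wrap(np.diff(psi, axis=0))
    dy[:, :-1] = wrap(np.diff(psi, axis=1))
    # Right-hand side rho of Eq. (9.19).
    rho = dx + dy
    rho[1:, :] -= dx[:-1, :]
    rho[:, 1:] -= dy[:, :-1]
    # Solve the discrete Poisson equation (9.18) in the cosine-transform domain.
    cm, cn = dct_matrix(m), dct_matrix(n)
    rho_hat = cm @ rho @ cn.T
    eig = (2.0 * np.cos(np.pi * np.arange(m) / m)[:, None]
           + 2.0 * np.cos(np.pi * np.arange(n) / n)[None, :] - 4.0)
    eig[0, 0] = 1.0            # avoid division by zero for the constant mode
    phi_hat = rho_hat / eig
    phi_hat[0, 0] = 0.0        # the solution is defined up to a constant
    return cm.T @ phi_hat @ cn


# Synthetic check: a smooth surface spanning several phase cycles.
ii, jj = np.meshgrid(np.arange(32), np.arange(24), indexing="ij")
truth = 0.02 * (ii - 10) ** 2 + 0.3 * jj
estimate = unwrap_ls(wrap(truth))
print(np.max(np.abs((estimate - estimate.mean()) - (truth - truth.mean()))))
```

On noise-free data whose true phase gradients stay below π, the estimate agrees with the true surface up to a constant offset. With noisy or discontinuous data the same solver smooths local errors across the image, which is the weakness noted above.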

In practice, it is hard to run the phase unwrapping process in a fully automated fashion, in spite of extensive research on this aspect of InSAR [151]. Human intervention is always needed. Phase unwrapping therefore remains an active research area, with improved automation as one of its goals.

9.3.2 Accuracy Analysis of DEMs Generated from InSAR

The accuracy of the final DEMs generated from InSAR may be affected by many factors from SAR instrument design to image analysis. The major problems can be summarized as follows [1].

1. Inaccurate knowledge of the acquisition geometry.

2. Atmospheric or ionospheric delays.

3. Phase unwrapping errors.

4. Decorrelation that depends on land use type (scattering composition or geometry), which increases phase noise in the interferogram.

5. Layover or shadowing, which may directly affect interferometry.

For InSAR operational conditions, a critical baseline was defined for choosing SAR image pairs to generate an interferogram [112]. The critical baseline describes the maximum separation of the satellite orbits in the direction orthogonal to both the along-track (azimuth) and the across-track (slant range) directions. If the critical value is exceeded, clear phase fringes cannot be expected in the interferogram. Toutin and Gray [151] indicated that the optimum baseline is terrain dependent: moderate to large slopes can generate a phase that is difficult to process in the phase unwrapping stage, and a baseline between a third and a half of the critical baseline is good for DEM generation if the terrain slope is moderate.


H. Wei and M. Bartels |

The final elevation error can be propagated from each variable presented in Eqs. (9.8), (9.14) and (9.15), as a function of H, B, α, r, and Δr (i.e. the phase difference ψ). Based on the geometric errors caused by H, B, α, r, and ψ, the related elevation errors can be calculated by partially differentiating these functions [126], as shown in Eq. (9.21).

$$
\begin{aligned}
\delta h_H &= \delta H \\
\delta h_B &= -\frac{r\tan(\theta-\alpha)\,(\sin\theta+\cos\theta\tan\tau)}{B}\,\delta B \\
\delta h_\alpha &= r\,(\sin\theta+\cos\theta\tan\tau)\,\delta\alpha \\
\delta h_r &= -\cos\theta\,\delta r \\
\delta h_\psi &= \frac{\lambda\, r\,(\sin\theta+\cos\theta\tan\tau)}{4\pi B\cos(\theta-\alpha)}\,\delta\psi
\end{aligned}
\tag{9.21}
$$

where τ is the terrain surface slope in the slant range direction. The overall elevation error can be a sum of the errors presented in Eq. (9.21). To rectify these errors, it is useful to classify them into the following three types.

1. Random errors. These are errors introduced randomly in the DEM generation procedure, such as electronic noise of the SAR instruments and interferometric phase estimation error. They cannot be compensated by tie points, i.e. corresponding points whose correct ground positions are known. The random errors affect the precision of the InSAR system, while the following errors determine the elevation accuracy.

2. Geometric distortion. This includes altitude errors, baseline errors, and SAR internal clock timing errors (or atmospheric delay). These are systematic errors that may be corrected by tie points.

3. Position errors. These include orbit errors and SAR processing azimuth location errors, and may be removed by a simple vertical or horizontal shift of the topographical surface.
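The five contributions of Eq. (9.21) are straightforward to evaluate numerically. The sketch below is illustrative only: the function name `elevation_error_terms`, the geometry and the error magnitudes are all hypothetical, and the phase term is taken as λ r (sin θ + cos θ tan τ) / (4π B cos(θ − α)) · δψ. Lengths are in meters and angles in radians.

```python
import math


def elevation_error_terms(r, B, theta, alpha, tau, wavelength,
                          dH=0.0, dB=0.0, dalpha=0.0, dr=0.0, dpsi=0.0):
    """Evaluate the elevation-error contributions of Eq. (9.21)."""
    # Common slope factor shared by the baseline, angle and phase terms.
    slope = math.sin(theta) + math.cos(theta) * math.tan(tau)
    terms = {
        "H": dH,
        "B": -r * math.tan(theta - alpha) * slope / B * dB,
        "alpha": r * slope * dalpha,
        "r": -math.cos(theta) * dr,
        "psi": (wavelength * r * slope
                / (4.0 * math.pi * B * math.cos(theta - alpha)) * dpsi),
    }
    # The text describes the overall error as a sum of the individual terms.
    terms["total"] = sum(terms.values())
    return terms


# Hypothetical geometry and error magnitudes, chosen only for illustration.
example = elevation_error_terms(
    r=850e3, B=100.0, theta=math.radians(23.0), alpha=math.radians(10.0),
    tau=math.radians(5.0), wavelength=0.0566,
    dH=1.0, dB=0.01, dalpha=math.radians(0.001), dr=1.0,
    dpsi=math.radians(10.0),
)
for name, value in example.items():
    print(f"delta_h_{name}: {value:+.2f} m")
```

Printing the terms side by side shows which input dominates for a given geometry. Increasing `tau` shows how terrain slope amplifies the baseline, angle and phase contributions through the shared slope factor.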

From the above discussion, it can be seen that the elevation accuracy of DEMs generated from InSAR is influenced by various factors. Atmospheric irregularities affect the quality of SAR images and induce errors in the interferogram phase. Terrain type also affects the accuracy through scattering composition and geometry. This can be seen from Eq. (9.21), where the terrain surface slope along the slant range direction contributes to several of the original errors, such as the baseline error and the phase error. In theory, the interferometric principle used in DEM generation from InSAR gives height accuracy at the level of the SAR wavelength (i.e. millimeters to centimeters), corresponding to the measurable phase difference. However, this is hard to achieve in practice due to the errors mentioned above and the uncertainty introduced by imaging geometry and atmospheric irregularities (especially for repeat-pass interferometry). It was reported that atmospheric effects on repeat-pass InSAR could induce significant variations of 0.3 to 2.3 phase cycles in an interferogram. For an ERS interferogram with a baseline of 100 m, atmospheric effects can cause elevation errors of up to about 100–200 m in DEMs [70].
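As a rough plausibility check of these figures, the phase term of Eq. (9.21) can be evaluated for flat terrain (τ = 0). The ERS values used here (λ ≈ 5.66 cm, r ≈ 850 km, θ ≈ 23°) are typical figures assumed for illustration and are not given in the text. The 100 m baseline is assumed to be the perpendicular component B cos(θ − α).

```latex
\begin{align*}
\delta h_\psi
  &= \frac{\lambda\, r \sin\theta}{4\pi\, B\cos(\theta-\alpha)}\,\delta\psi ,
  \qquad \delta\psi = 2\pi \ \text{(one phase cycle)} \\
  &\approx \frac{0.0566 \times 850\,000 \times \sin 23^\circ}{2 \times 100}\ \text{m}
   \approx 94\ \text{m per cycle} \\
0.3\ \text{cycles} &\;\rightarrow\; \approx 28\ \text{m}, \qquad
2.3\ \text{cycles} \;\rightarrow\; \approx 216\ \text{m}
\end{align*}
```

Under these assumptions, the upper end of the range is consistent with the reported errors of up to about 100–200 m.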


Quantitative validation of DEMs generated from InSAR needs ground truth for comparison, such as GCPs or DEMs generated by higher-accuracy instruments. As with DEM generation from optical stereoscopic imagery, RMSE and standard deviation can be used as metrics in accuracy evaluation. The reported elevation accuracy of the SRTM⁷ data [82], created using C-band InSAR, was 2–25 m, with significant variation depending on the terrain and ground cover type (i.e. vegetation). Where vegetation is dense, InSAR does not provide good DEM results, whereas with only sparse or low vegetation the elevation accuracy can reach 2 m. The horizontal resolution of SRTM SAR data is typically about 90 m for most areas, which is much coarser than the 2–20 m resolution of optical satellite images. ERS-1 and ERS-2 data were exploited for DEM generation by Mora et al. [113], and the reconstructed DEMs were compared with those from SRTM InSAR over the same areas, giving a maximum difference of 35–60 m and a standard deviation of 3–11 m. Normally, ±10 m elevation accuracy is expected from SRTM InSAR-generated DEMs [123].
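The RMSE and standard deviation mentioned above can be computed directly from DEM heights sampled at check points with known reference heights. The sketch below is a minimal illustration; the function name `dem_accuracy` and the sample heights are made up for the example.

```python
import math


def dem_accuracy(dem_heights, reference_heights):
    """Return mean error, RMSE and sample standard deviation of DEM errors (m)."""
    errors = [d - g for d, g in zip(dem_heights, reference_heights)]
    n = len(errors)
    mean = sum(errors) / n
    # RMSE measures the total deviation from the reference, including any bias.
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    # The standard deviation measures the spread around the mean error only.
    std = math.sqrt(sum((e - mean) ** 2 for e in errors) / (n - 1))
    return {"mean": mean, "rmse": rmse, "std": std}


# Made-up DEM heights at four check points with a reference height of 100 m.
stats = dem_accuracy([102.0, 98.0, 105.0, 101.0], [100.0, 100.0, 100.0, 100.0])
print(stats)
```

A mean error that is large relative to the standard deviation points to a systematic offset, which a vertical shift or tie points can remove. A large standard deviation reflects random error.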

Because it is difficult to meet the geometric requirements for coherence between the two complex SAR images used in interferometry, InSAR is not yet as popular as stereoscopic imagery for DEM generation [88]. In comparison with satellite stereoscopic imagery, the major advantage of InSAR is that it is independent of weather conditions: SAR illuminates its target with an emitted electromagnetic wave, and the antenna receives the coherent radiation reflected from the target. In areas where optical sensors fail to provide data, such as areas of heavy cloud, InSAR can be used as a complementary sensing mode for global DEM generation.

9.3.3 Examples of DEM Generation from InSAR

The first example presented in this section is a DEM from SRTM data [123]. The parameters of the interferometer are listed in Table 9.2.

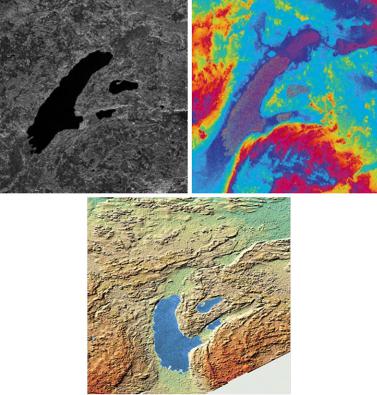

Figure 9.9 (top left) shows the magnitude (or amplitude) of SRTM raw data from the master antenna. The imaging area consists of 600 × 450 pixels. Each pixel of the image is a complex value of amplitude and phase as shown in Eq. (9.7). Figure 9.9 (top right) is the phase of the interferogram, and the reconstructed DEM is presented in Fig. 9.9 (bottom).

The processing of the SRTM InSAR data into the DEM is split into two parts: InSAR data processing and geocoding. The first part covers the steps necessary to get from the sensor raw data to the unwrapped phase, while the second part deals with the precise geometric transformation from the sensor domain to the cartographic (map) coordinate space.

⁷ SRTM: the Shuttle Radar Topography Mission aimed to obtain DEMs on a near-global scale from 56° S to 60° N.

Table 9.2 Important parameters of the SRTM SAR interferometer

| Parameter | Value |
|---|---|
| Wavelength (cm) | 3.1 |
| Range pixel spacing (m) | 13.3 |
| Azimuth pixel spacing (m) | 4.33 |
| Range bandwidth (MHz) | 9.5 |
| Range sampling frequency (MHz) | 11.25 |
| Effective baseline length (m) | 59.9 |
| Baseline angle (degree) | 45 |
| Look angle at scene center (degree) | 54.5 |
| Orbit height (km) | 233 |
| Slant range distance at scene center (km) | 392 |
| Height ambiguity per 2π fringe (m) | 175 |
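Some entries of Table 9.2 can be cross-checked against each other with standard radar relations, which are not stated in the text and are assumed here: slant range sample spacing c / (2 f_s), slant range resolution c / (2 · bandwidth), and a flat-terrain height ambiguity λ r sin θ / (p B cos(θ − α)). The factor p is taken as 1 on the assumption that a single antenna transmits, as in a single-pass system, and as 2 for repeat-pass geometry. All function names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def slant_range_spacing(sampling_frequency_hz):
    """Slant range distance between consecutive samples (two-way travel)."""
    return C / (2.0 * sampling_frequency_hz)


def slant_range_resolution(bandwidth_hz):
    """Slant range resolution for a given chirp bandwidth."""
    return C / (2.0 * bandwidth_hz)


def height_ambiguity(wavelength, slant_range, look_angle,
                     baseline, baseline_angle, p=1):
    """Flat-terrain height change per 2*pi fringe; p=1 assumes single-pass."""
    b_perp = baseline * math.cos(look_angle - baseline_angle)
    return wavelength * slant_range * math.sin(look_angle) / (p * b_perp)


# Values taken from Table 9.2 (converted to SI units and radians).
spacing = slant_range_spacing(11.25e6)
resolution = slant_range_resolution(9.5e6)
h_amb = height_ambiguity(0.031, 392e3, math.radians(54.5),
                         59.9, math.radians(45.0))
print(f"range pixel spacing: {spacing:.2f} m")
print(f"range resolution:    {resolution:.2f} m")
print(f"height ambiguity:    {h_amb:.1f} m")
```

The computed spacing reproduces the tabulated 13.3 m. The simplified height ambiguity comes out near 167 m, within about 5 % of the tabulated 175 m. The remaining gap is not explained by this flat-terrain model.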

1. InSAR data processing steps.

a. SAR focusing. Once the site has been selected, the corresponding SAR images must be focused. The physical reason for SAR image defocusing is the occurrence of unknown fluctuations in the microwave path length between the antenna and the scene [115]. Several algorithms exist for SAR focusing; in this example, the chirp scaling algorithm was selected [124].

b. Motion compensation. Oscillations of the mounting device of the SAR equipment introduce distortion into the images. These oscillations can be filtered out of the received signals before azimuth focusing is performed. The SAR image after focusing and motion compensation is shown in Fig. 9.9 (top left).

c. Co-registration and interpolation. Due to the Earth’s curvature, different antenna positions and delays in the electronic components, the two images may be both shifted and stretched with respect to each other by the order of a couple of pixels. In this example, instead of using correlation methods, image registration was conducted solely from the baseline geometry measured by the Attitude and Orbit Determination Avionics instruments.

d. Interferogram formation and filtering. The interferogram is formed by multiplication and subsequent weighted averaging of the complex samples of the master and slave images. The averaging window corresponds to 8 pixels in azimuth and 2 pixels in slant range. This combination has been shown to provide the best tradeoff between spatial resolution and height error.

e. Phase unwrapping. Phase unwrapping was performed by a version of the minimum cost flow algorithm [36]. The computational time ranged from about 5 minutes (on a parallel computer) for moderate terrain to several hours for complicated fringe patterns in mountainous regions.

2. Geocoding steps.

a. The unwrapped phase image is converted into an irregular grid of geolocated points, given by height, latitude and longitude on the Earth’s surface, using WGS84 as the horizontal and vertical reference.

b. The irregular grid is interpolated to a corresponding regular grid.

c. Slant range images are converted into their regular grid equivalents. The mapped DEM is shown in Fig. 9.9 (bottom).

Fig. 9.9 InSAR generated DEM of Lake Ammer, Germany. Top left: Magnitude of raw data. Top right: Interferogram phase. Bottom: DEMs from phase. Figure courtesy of [123]
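The interferogram formation of step 1d can be sketched as follows. This is a simplified illustration, not the SRTM processor: it assumes the two images are already co-registered, multiplies the master by the complex conjugate of the slave, and uses a plain (unweighted) block average of 8 azimuth by 2 range pixels instead of the weighted averaging described above. The function name `multilook_interferogram` and the synthetic data are hypothetical.

```python
import numpy as np


def multilook_interferogram(master, slave, looks_az=8, looks_rg=2):
    """Form a complex interferogram and average it over looks_az x looks_rg blocks."""
    # Pixel-wise product with the conjugate: the phase is the phase difference.
    ifg = master * np.conj(slave)
    # Crop so that the image divides evenly into averaging blocks.
    rows = (ifg.shape[0] // looks_az) * looks_az
    cols = (ifg.shape[1] // looks_rg) * looks_rg
    blocks = ifg[:rows, :cols].reshape(rows // looks_az, looks_az,
                                       cols // looks_rg, looks_rg)
    # Averaging complex samples reduces phase noise at the cost of resolution.
    return blocks.mean(axis=(1, 3))


# Synthetic pair: azimuth along rows, slant range along columns.
rng = np.random.default_rng(0)
slave_img = rng.normal(size=(64, 10)) + 1j * rng.normal(size=(64, 10))
master_img = slave_img * np.exp(1j * 0.7)   # constant 0.7 rad phase offset
averaged = multilook_interferogram(master_img, slave_img)
print(averaged.shape, np.angle(averaged).mean())
```

The phase of the averaged product, `np.angle(averaged)`, is the wrapped interferometric phase that is passed on to phase unwrapping in step 1e.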

The reconstructed DEM has a horizontal accuracy, relative vertical accuracy and absolute vertical accuracy of 20 m, 6 m and 16 m, respectively, quoted as LE90 (linear error at 90 % confidence).

The second example is taken from a pair of ERS-1 SAR images of a mountainous region of Sardinia, Italy [37]. The image has 329 × 641 pixels. Figure 9.10(a) is the interferogram phase image, and Fig. 9.10(b) is the unwrapped phase image, with the white points representing existing values. Phase unwrapping was done by a fast algorithm based on LS methods. It was claimed that the reconstructed DEM was in qualitative agreement with the elevation reported in geographic maps. The overall accuracy was not given because the published research focused on the phase unwrapping algorithm, but the computational time required for phase unwrapping was reported as 25 minutes on a Silicon Graphics Power Onyx RE2 machine for the mountainous region of Sardinia.